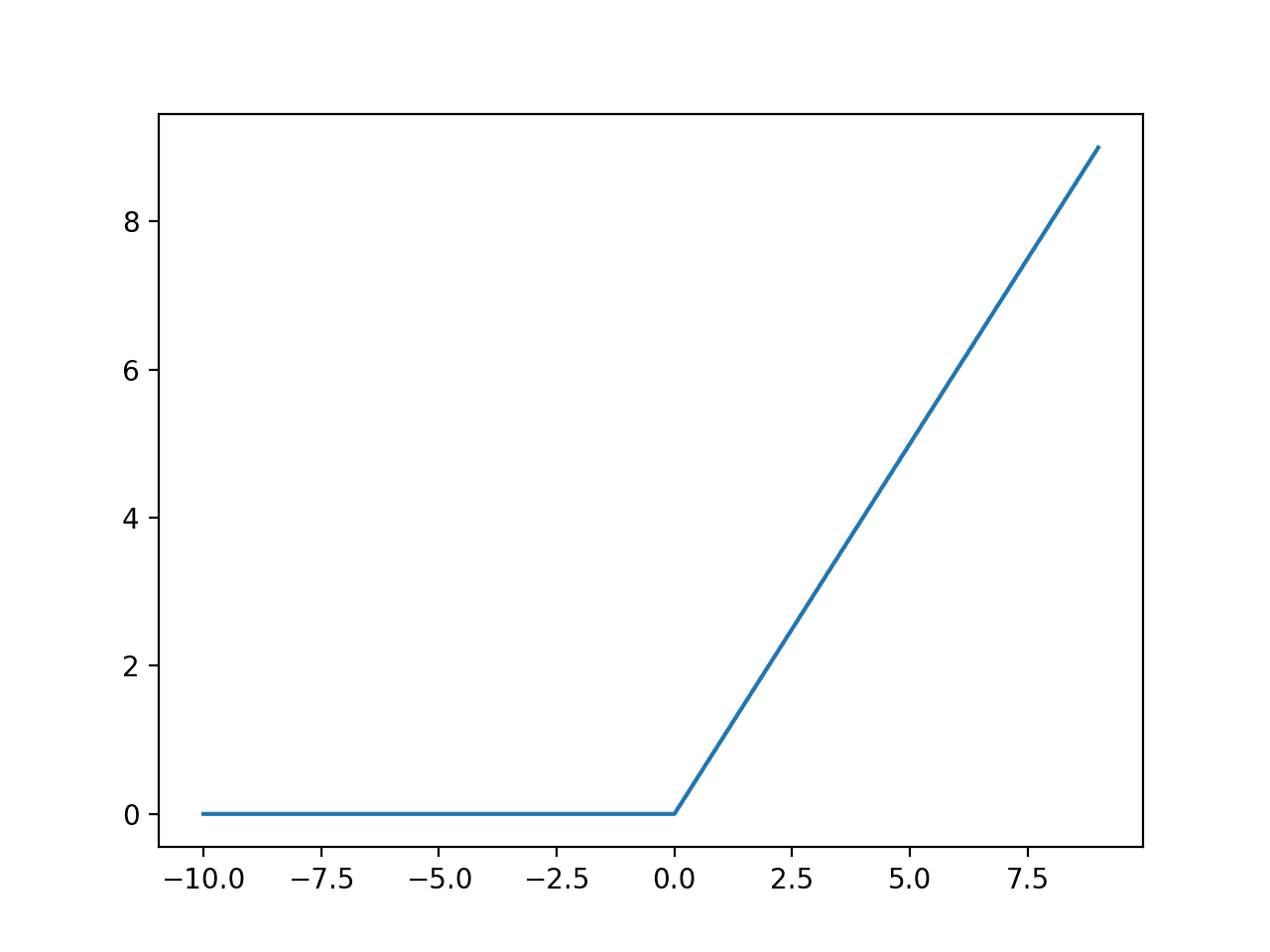

The ReLU activation function

The most popular activation in deep learning is a classical object in disguise

ReLU has a modern-sounding name, but the function itself is old.

It is defined as

$$\mathrm{ReLU}(x) = \max\{0, x\}.$$

This is one of the most popular activation functions in deep learning, and it is probably the most studied among mathematicians because it is closely related to well-known mathematical objects.

If you have studied real analysis, convex optimisation, or even a bit of PDEs, you’ve likely met it under a different alias:

the positive part,

the ramp function,

a one-sided hinge.

Today, I want to collect a few properties of the ReLU that are worth remembering and connect them to “classical” functions such as the absolute value, the binary maximum and minimum, the Heaviside step, and splines.

1) The three properties that explain 80% of ReLU

Piecewise linear (with one kink)

ReLU is affine on each side of 0:

if x<0, ReLU(x)=0,

if x>0, ReLU(x)=x.

So it is made of two linear pieces that meet at a single point, x=0, where both pieces give ReLU(0)=0. That single kink is a surprisingly powerful building block.
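As a minimal sketch of the two branches, the snippet below evaluates the function on each side of 0. The name `relu` and the sample points are illustrative choices, not something fixed by this post.

```python
def relu(x: float) -> float:
    """Return the positive part of x, i.e. max(0, x)."""
    return max(0.0, x)

# Illustrative sample points on each side of the kink at 0.
negatives = [-3.0, -1.5, -0.1]
positives = [0.1, 1.5, 3.0]

left_values = [relu(x) for x in negatives]   # constant branch: all zeros
right_values = [relu(x) for x in positives]  # identity branch: inputs returned unchanged

print(left_values)   # [0.0, 0.0, 0.0]
print(right_values)  # [0.1, 1.5, 3.0]
```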

Monotone and 1-Lipschitz

ReLU is non-decreasing, and it never stretches distances by more than a factor of 1:

$$|\mathrm{ReLU}(x) - \mathrm{ReLU}(y)| \le |x - y| \quad \text{for all } x, y \in \mathbb{R}.$$

Entrywise ReLU on vectors inherits the same idea: it is non-expansive in the sense that it can only reduce (or preserve) coordinate differences, never amplify them. The 1-Lipschitz property is shared by several other commonly used activation functions, such as tanh and the logistic sigmoid.
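A quick numerical sanity check of both properties, assuming random sample pairs drawn from [-5, 5] (the range, the seed, and the vectors `u` and `v` are arbitrary choices for illustration). This is evidence on samples, not a proof.

```python
import random

def relu(x):
    return max(0.0, x)

random.seed(0)  # fixed seed so the run is reproducible
samples = [(random.uniform(-5, 5), random.uniform(-5, 5)) for _ in range(10_000)]

# Largest observed ratio |ReLU(x) - ReLU(y)| / |x - y|.
# Being 1-Lipschitz means this ratio never exceeds 1.
worst_ratio = max(
    abs(relu(x) - relu(y)) / abs(x - y) for x, y in samples if x != y
)

# Monotonicity: the smaller input never gets the larger output.
is_monotone = all(relu(min(x, y)) <= relu(max(x, y)) for x, y in samples)

# Entrywise version: each coordinate gap can only shrink or stay the same.
u = [1.5, -2.0, 0.3]
v = [-0.5, -1.0, 2.3]
gaps_before = [abs(a - b) for a, b in zip(u, v)]
gaps_after = [abs(relu(a) - relu(b)) for a, b in zip(u, v)]

print(worst_ratio <= 1.0, is_monotone)
print(all(after <= before for before, after in zip(gaps_before, gaps_after)))
```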

Convex

The ReLU function is convex. Geometrically, this can be seen by noticing that the line segment joining any two points on its graph never goes below the graph.

Another way to verify its convexity is by noticing that it is the maximum of two convex functions: f1(x)=0 and f2(x)=x. The convexity of ReLU then follows by recalling that the maximum of convex functions is again convex (see this question, for example).
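The chord condition can also be spot-checked numerically. The sketch below compares the function value at a convex combination of two inputs with the same combination of the two outputs; the sampling range, the seed, and the tolerance for floating-point rounding are assumptions made for this example.

```python
import random

def relu(x):
    return max(0.0, x)

random.seed(0)  # fixed seed so the run is reproducible
max_violation = 0.0
for _ in range(10_000):
    x = random.uniform(-5, 5)
    y = random.uniform(-5, 5)
    t = random.random()  # mixing weight in [0, 1)
    on_graph = relu(t * x + (1 - t) * y)         # function at the mixed input
    on_chord = t * relu(x) + (1 - t) * relu(y)   # chord between the two graph points
    # Convexity says on_graph <= on_chord; track the largest excess seen.
    max_violation = max(max_violation, on_graph - on_chord)

# Anything above rounding level would contradict convexity.
print(max_violation <= 1e-12)
```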

2) ReLU and the absolute value

A classical identity is:

$$|x| = \max\{x, -x\}.$$

ReLU lets you package that into a clean decomposition:

$$|x| = \mathrm{ReLU}(x) + \mathrm{ReLU}(-x).$$

Even nicer:

$$\mathrm{ReLU}(x) = \frac{x + |x|}{2}.$$

So you can think of ReLU as “half of x plus half of its magnitude”.

This is why ReLU often shows up in convex analysis: |x| is the prototype non-smooth convex function, and ReLU is one of its simplest one-sided pieces.
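The relations between ReLU and the absolute value are easy to check on a handful of numbers. The sketch below tests three of them: |x| as the larger of x and -x, |x| as the sum of the positive parts of x and -x, and ReLU as half of x plus half of |x|. The sample values are arbitrary.

```python
def relu(x):
    return max(0.0, x)

values = [-2.5, -1.0, 0.0, 0.75, 3.0]  # illustrative inputs

# |x| is the larger of x and -x.
abs_as_max = all(abs(x) == max(x, -x) for x in values)

# |x| is the positive part of x plus the positive part of -x.
abs_as_sum = all(abs(x) == relu(x) + relu(-x) for x in values)

# ReLU is half of x plus half of its magnitude.
relu_as_average = all(relu(x) == (x + abs(x)) / 2 for x in values)

print(abs_as_max, abs_as_sum, relu_as_average)  # True True True
```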

3) ReLU is a max-machine

ReLU isn’t just a function. It is a small toolkit for building other piecewise-linear operations.

The most useful identity is:

$$\max\{x, y\} = x + \mathrm{ReLU}(y - x).$$

We can easily verify this identity:

If y is not larger than x, then the ReLU term vanishes. We hence recover x, which is the maximum among them.

If y>x, we have ReLU(y-x)=y-x, hence recovering x+y-x=y on the right-hand side, which is their maximum.

Similarly,

$$\min\{x, y\} = x - \mathrm{ReLU}(x - y).$$

Once you see these, it becomes hard to unsee them.
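Here is a small sketch of the max and min constructions, written with integers so the arithmetic is exact (with floats, x + (y - x) can differ from y by a rounding error). The helper names are hypothetical, and the three-argument maximum is just one way of nesting the two-argument version.

```python
def relu(x):
    return max(0, x)

def max_via_relu(x, y):
    # If y <= x the ReLU term vanishes; otherwise it adds exactly y - x.
    return x + relu(y - x)

def min_via_relu(x, y):
    # If x <= y the ReLU term vanishes; otherwise it removes exactly x - y.
    return x - relu(x - y)

def max3_via_relu(x, y, z):
    # Nesting the binary maximum gives the maximum of three numbers.
    return max_via_relu(max_via_relu(x, y), z)

pairs = [(3, 7), (7, 3), (-2, -5), (4, 4)]
results = [(max_via_relu(x, y), min_via_relu(x, y)) for x, y in pairs]

print(results)                  # [(7, 3), (7, 3), (-2, -5), (4, 4)]
print(max3_via_relu(1, 9, -4))  # 9
```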

Based on these identities and on the max-min representation of piecewise affine functions, one can show that any continuous piecewise affine function can be represented by a deep ReLU network. If you are interested in the proof, let me know in the comments, and I can discuss it in a later post.

4) Derivatives: the Heaviside step shows up

ReLU is continuous, but not differentiable at 0.

Away from 0, the derivative is simple:

$$\mathrm{ReLU}'(x) = \begin{cases} 0, & x < 0, \\ 1, & x > 0. \end{cases}$$

That piecewise-constant derivative is the classical Heaviside step function (up to what you decide to do exactly at 0).

So, in a very literal way:

ReLU is an integral of a step,

and a step is a derivative of the ReLU function.
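Both directions can be illustrated numerically. The sketch below approximates the derivative with a central difference and the integral with a midpoint Riemann sum. The step sizes, the lower integration limit of -2, and the convention that the step equals 0 at the origin are assumptions made for this example.

```python
import math

def relu(x):
    return max(0.0, x)

def heaviside(x):
    # The value at exactly 0 is a convention; 0 is assumed here.
    return 1.0 if x > 0 else 0.0

def central_difference(f, x, h=1e-6):
    """Approximate f'(x) with a symmetric finite difference."""
    return (f(x + h) - f(x - h)) / (2 * h)

def integrate_step(x, lower=-2.0, n=10_000):
    """Midpoint Riemann sum of the Heaviside step over [lower, x]."""
    width = (x - lower) / n
    return sum(heaviside(lower + (k + 0.5) * width) for k in range(n)) * width

test_points = [-1.5, -0.5, 0.5, 1.5]  # all away from the kink at 0

derivative_matches = all(
    math.isclose(central_difference(relu, x), heaviside(x), abs_tol=1e-6)
    for x in test_points
)
integral_matches = all(
    math.isclose(integrate_step(x), relu(x), abs_tol=1e-3)
    for x in test_points
)

print(derivative_matches, integral_matches)
```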

5) Why do networks become piecewise affine?

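A layer of a feedforward neural network typically looks like

$$x \mapsto \sigma(Wx + b),$$

where W is a weight matrix, b is a bias vector, and the activation function $\sigma$ is applied entrywise.

If

$$\sigma = \mathrm{ReLU},$$

then each neuron introduces a hyperplane “threshold” of the form

$$w_i^\top x + b_i = 0,$$

where $w_i^\top$ is the i-th row of W and $b_i$ is the i-th entry of b.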

On one side, you get output 0; on the other, you get an affine transformation of the input. This occurs in every neuron of every layer.

Stack enough of these, and you do not get a mysterious black box.

You get a function that is:

continuous,

and affine on each region of a polyhedral partition of space.

In other words: a big piecewise-affine object assembled out of hinges.
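To make this concrete, here is a minimal sketch with a single hidden ReLU layer in two dimensions. The weights, the sampling box, and the helper names are all made up for illustration. Points that switch on the same set of neurons lie in the same convex region, so the network should behave affinely between them: its value at a midpoint equals the average of the endpoint values.

```python
import random

def relu(x):
    return max(0.0, x)

def affine(W, b, x):
    """Compute W x + b for a matrix stored as a list of rows."""
    return [sum(w * t for w, t in zip(row, x)) + bias for row, bias in zip(W, b)]

# Hypothetical weights: 2 inputs -> 3 hidden neurons -> 1 output.
W1 = [[1.0, -1.0], [0.5, 2.0], [-1.0, 0.5]]
b1 = [0.0, -1.0, 0.5]
W2 = [[1.0, -2.0, 0.5]]
b2 = [0.25]

def network(x):
    hidden = [relu(z) for z in affine(W1, b1, x)]
    return affine(W2, b2, hidden)[0]

def activation_pattern(x):
    """Record which hidden neurons are active; each pattern labels one region."""
    return tuple(z > 0 for z in affine(W1, b1, x))

random.seed(0)  # fixed seed so the run is reproducible
points = [[random.uniform(-3, 3), random.uniform(-3, 3)] for _ in range(200)]

# Group the sample points by the region they fall into.
regions = {}
for p in points:
    regions.setdefault(activation_pattern(p), []).append(p)

# Inside one region the network is affine, so midpoints map to averages.
max_gap = 0.0
for members in regions.values():
    for p, q in zip(members, members[1:]):
        mid = [(a + c) / 2 for a, c in zip(p, q)]
        gap = abs(network(mid) - (network(p) + network(q)) / 2)
        max_gap = max(max_gap, gap)

print(len(regions), max_gap < 1e-9)
```

Three hyperplanes in the plane cut it into at most seven regions, so the number of distinct patterns found here is small; deeper and wider networks produce many more.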

Closing thought

ReLU is popular partly because it works well in practice.

But mathematically, the real reason it keeps reappearing is simpler:

It is one of the cleanest non-smooth convex functions you can write, and it is the smallest “hinge” you can use to assemble piecewise-linear structure.

Modern name, classical object.
