Linear stability: when your solver invents energy

A damped oscillator is supposed to die out — so why do some numerical methods make it explode?

There is a simple promise behind damping:

If friction is present, the oscillations should shrink.

So when a simulation of a damped system starts growing, it is not “a small numerical error”. It is a qualitative failure: your solver is injecting energy into a system that should be losing it.

This post is an intuition-first introduction to linear stability for ODE solvers, using a prototype example: the damped harmonic oscillator. If you prefer to watch a video where I covered similar topics, you can find it here:

The simplest system that still misbehaves

Consider the second-order ODE:

Here, q(t) is the position, and gamma is the damping coefficient.

Introduce the velocity v(t), defined by:

Pack the state into a vector:

Then the system becomes linear:

Because it is linear, we know the exact solution:

So why talk about numerics here at all?

Because this is the cleanest place where you can see a phenomenon that becomes unavoidable in bigger models: some methods need a step-size restriction to preserve qualitative behaviour.

Three solvers, three personalities

Let h>0 be the time step and let x_n approximate x(t_n), with t_n = n h.

For linear systems, each method is an “update matrix” applied to x_n.

Explicit Euler

This is the simplest method: one evaluation of the vector field, no linear solves, no fuss.

Implicit Euler

Here, you must solve a linear system every step. More work, but (as we will see) more forgiving stability.

Runge–Kutta of order 4 (RK4)

For linear systems, RK4 can be written as a polynomial in hA:

Think of it as a higher-order approximation of the matrix exponential update.

So far, everything sounds reasonable. The interesting part is what happens when we vary gamma and h.

The one-step lens

Linear stability is easiest to understand through a “one-step amplification” viewpoint.

Start from the scalar test equation:

Its exact update over one step is:

When the real part of lambda is negative, the true solution decays. That decay is the contract.

A numerical method replaces ehλ with some function R(h lambda):

The method is (linearly) stable at z if:

That set of z values is the method’s stability region.

For the three methods above, the corresponding stability functions are:

- Explicit Euler:

- Implicit Euler:

- RK4:

This is the core idea:

A solver is stable if each step acts like a contraction on the modes that should decay.

Why eigenvalues show up (and why stiffness hurts)

For the system x’ = A x, the m odes are controlled by the eigenvalues of A.

They are:

Three regimes fall out immediately:

- gamma < 2: complex conjugate eigenvalues, oscillatory decay

- gamma = 2: repeated real eigenvalue (critical damping)

- gamma > 2: two distinct negative real eigenvalues (overdamped)

Now comes the numerical punchline.

A method is stable for this problem at step size h if both scaled eigenvalues lie inside the method’s stability region:

When gamma becomes large, one eigenvalue becomes very negative: a very fast-decaying mode appears.

That is the simplest version of stiffness: the dynamics contain both

- a slow, visible time scale, and

- a fast, rapidly decaying time scale

and explicit methods are forced to resolve the fast one (via a small h) just to avoid blowing up, even if you do not care about that mode’s detailed shape.

Implicit Euler behaves differently: its stability region contains the entire left half-plane. So it stays stable even when

is far to the left.

Stable does not mean accurate — but it does mean “no invented explosions”.

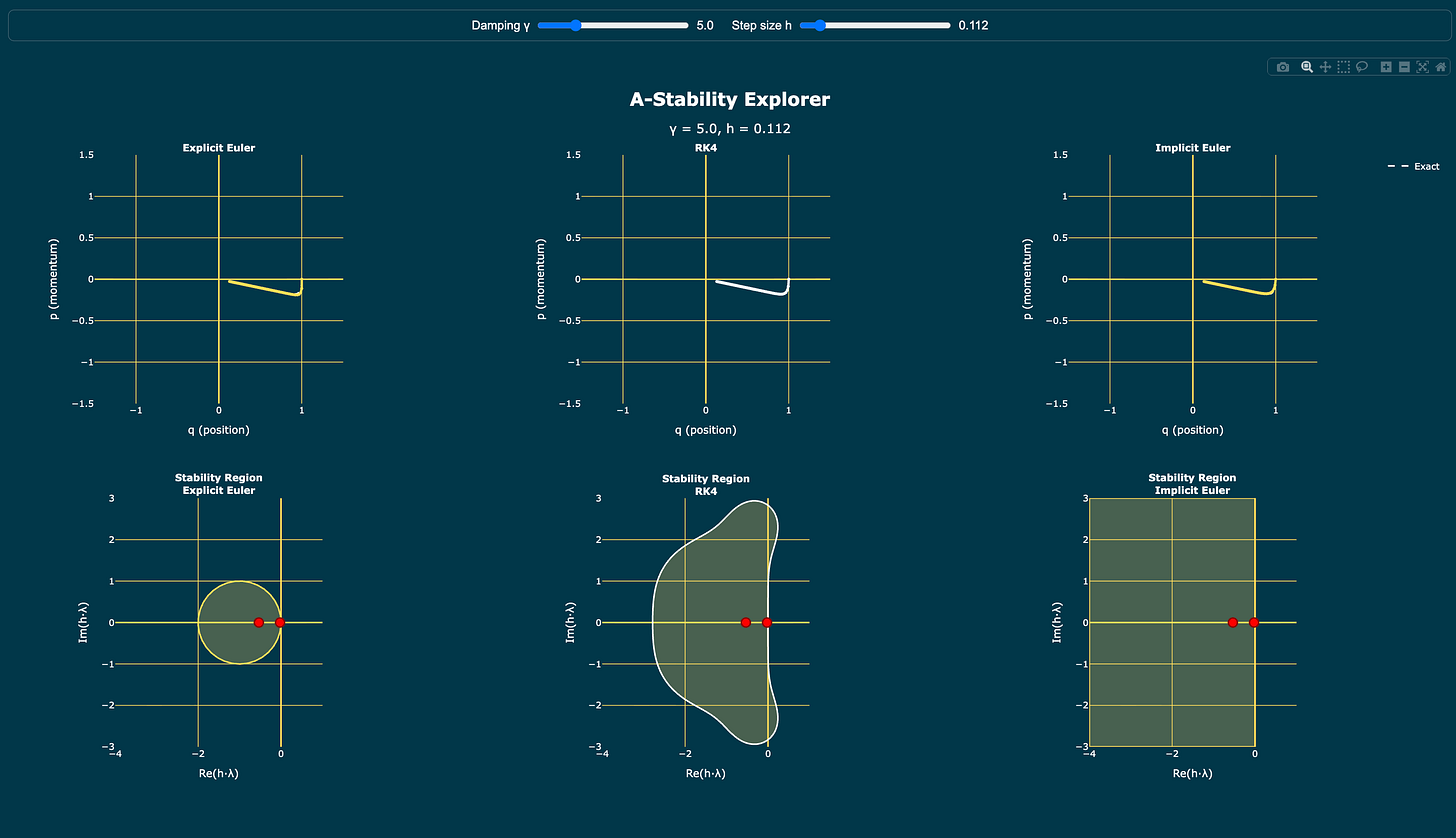

How to use the stability explorer

In the interactive explorer (the one shown in the video), you can move two sliders:

- damping gamma

- step size h

At the top, you see phase portraits: the exact trajectory compared with Explicit Euler, RK4, and Implicit Euler.

At the bottom, you see the stability regions for each method, and two red points corresponding to the scaled eigenvalues:

The rule of thumb is immediate:

If a red point exits a method’s stability region, that method can start producing qualitatively wrong behaviour (including blow-up) even though the true solution decays.

Closing thought

Linear stability is not about getting the fourth decimal right.

It is about not breaking the physics.

In this example, the story is: damping removes energy.

An A-stable method respects this behaviour for every h>0.

If this post helped you build intuition for stability regions and stiffness, consider subscribing and sharing Mathone on Substack: